You tried to scale your crypto system and something broke.

Not the obvious stuff. Like nodes crashing. The quiet kind.

Keys rotting in storage. Compliance gaps showing up six months later. A bug nobody saw coming because it lived in the handshake between old and new protocols.

That’s not growth. That’s gambling.

A Growth Plan Drhcryptology isn’t about adding more servers or speeding up consensus. It’s about lining up research, implementation, compliance, and talent so none of them drag the others down.

Most teams skip the hard parts. They ignore cryptographic debt until it screams. They rotate keys on a calendar instead of risk.

They assume regulations won’t shift under their feet (they do).

I’ve seen it twice this year alone, in decentralized identity pilots and tokenized asset platforms. Both launched clean. Both got hit post-expansion with vulnerabilities rooted in how they planned to grow.

Not whether they could.

You’re not here for theory. You’re an architect, security lead, or product manager. You need steps that work today.

Not slides. Not frameworks. Actual decisions you can make before lunch.

This is that guide.

No fluff. No jargon detours. Just what you actually have to do, and why skipping any piece will cost you later.

Why DevOps Scaling Breaks Crypto

I’ve watched teams add ten more validator nodes and call it “done.”

It’s not done. It’s broken.

Horizontal scaling multiplies cryptographic surface area. Fast. More nodes mean more key generation attempts.

More chances for distributed ECDSA failures. Think regions verifying signatures with slightly different clock times or entropy pools.

You think your time-based tokens are safe? Try syncing them across three AWS zones with 87ms clock skew. (Yeah, I checked.)
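To make the skew problem concrete, here is a minimal sketch of a time-based code check with a tolerance window, assuming RFC 6238 style TOTP over HMAC-SHA1. The function names and the `window` parameter are mine, not from any particular library. The point is that a code issued just before a 30-second step boundary fails on a verifier whose clock is 87ms ahead unless the verifier accepts the neighboring step.

```python
import hashlib
import hmac
import struct


def totp(secret: bytes, unix_time: float, step: int = 30, digits: int = 6) -> str:
    """RFC 6238 style code: HMAC-SHA1 over the time-step counter, truncated."""
    counter = int(unix_time // step)
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation offset from the last nibble
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def verify_totp(secret: bytes, candidate: str, unix_time: float,
                step: int = 30, window: int = 1) -> bool:
    """Accept codes from `window` steps either side of the verifier's clock."""
    for drift in range(-window, window + 1):
        expected = totp(secret, unix_time + drift * step, step, len(candidate))
        if hmac.compare_digest(expected, candidate):
            return True
    return False
```

A wider window tolerates more skew but also lengthens the time a stolen code stays valid, so treat `window` as a risk decision rather than a convenience setting.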

Here’s a real win: threshold ECDSA signing across zones. One team did it right. Used HSM-attested quorums, synced entropy sources, rotated keys in lockstep.

Zero signature mismatches after scaling to 42 nodes.

Then there’s the horror story: auto-scaling killed their TLS pinning. New pods loaded old pinned certs. Clients dropped connections.

For two days. Nobody noticed until payments failed.

Three hidden failure modes I see weekly:

Nonce reuse under load

TLS certificate pinning mismatches during auto-scaling

Asymmetric key rotation gaps in stateless services
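For the first failure mode, one common mitigation is to stop picking nonces at random under load and instead derive them from a node-unique prefix plus a counter. The sketch below assumes 96-bit nonces (the size AES-GCM typically uses), a unique `node_id` per instance, and a counter that is persisted or re-keyed across restarts. `NonceAllocator` is a hypothetical name, and none of this comes from a specific library.

```python
import threading


class NonceAllocator:
    """Hands out 96-bit nonces: 4-byte node id followed by an 8-byte counter."""

    def __init__(self, node_id: int, start: int = 0):
        if not 0 <= node_id < 2 ** 32:
            raise ValueError("node_id must fit in 32 bits")
        self._prefix = node_id.to_bytes(4, "big")
        self._counter = start  # assumption: restored from durable state on restart
        self._lock = threading.Lock()

    def next_nonce(self) -> bytes:
        # Serialize the increment so concurrent callers never share a value.
        with self._lock:
            value = self._counter
            if value >= 2 ** 64:
                raise OverflowError("counter exhausted; rotate the key")
            self._counter += 1
        return self._prefix + value.to_bytes(8, "big")
```

Uniqueness here depends entirely on no two nodes sharing a `node_id` under the same key, which is exactly the kind of assumption auto-scaling tends to break.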

Drhcryptology taught me this early: Growth Plan Drhcryptology isn’t about more servers. It’s about fewer assumptions.

Auto-scaling groups need synchronized HSM attestation.

Stateless API pods can’t cache private keys. They need enclave-bound session keys.

You’re not scaling infrastructure. You’re scaling trust. And trust doesn’t auto-scale.

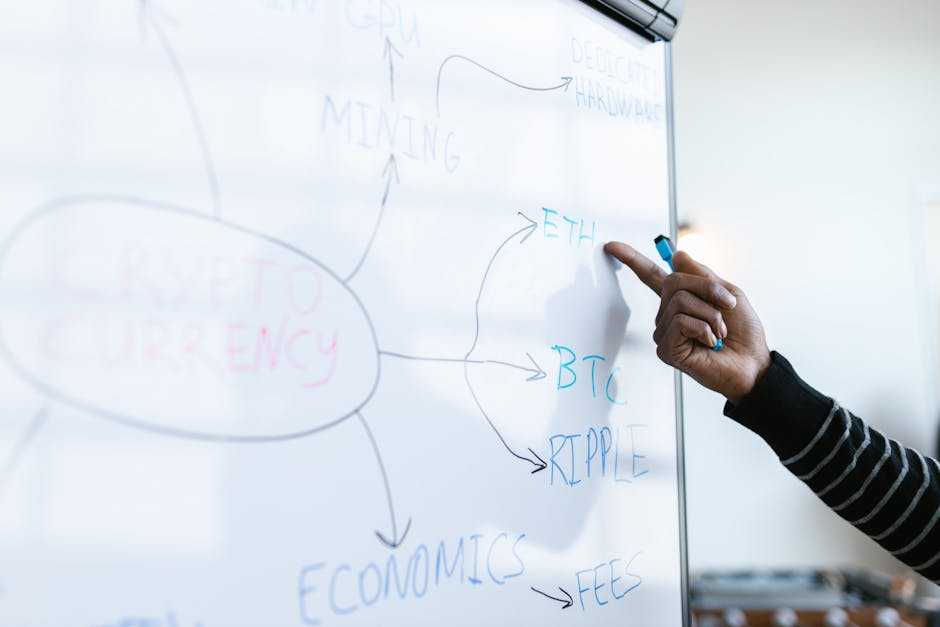

The Four Pillars of Cryptology-Aware Growth

I don’t call it a “roadmap.” I call it a survival checklist.

Pillar 1 is Cryptographic Inventory & Debt Mapping. You must know where crypto lives, and where it’s rotting. Scan your code with AST tools, then manually tag every crypto use in auth, storage, and APIs.

Found SHA-1 in a login flow? That’s not legacy. That’s liability.
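As a rough illustration of the AST scan, here is a small sketch using Python's standard `ast` module. It assumes the codebase is Python and only flags direct `hashlib.md5(...)`, `hashlib.sha1(...)`, and `hashlib.new("sha1")` style calls. The `WEAK_HASHES` set and the `find_weak_hashes` name are placeholders, and a real scan would need to cover aliases, other libraries, and other languages.

```python
import ast

WEAK_HASHES = {"md5", "sha1"}  # illustrative list, extend per your policy


def find_weak_hashes(source: str, filename: str = "<source>"):
    """Return sorted (filename, line, algorithm) tuples for weak hashlib calls."""
    findings = []
    for node in ast.walk(ast.parse(source, filename=filename)):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        # Only match the literal pattern `hashlib.<something>(...)`.
        if not (isinstance(func, ast.Attribute)
                and isinstance(func.value, ast.Name)
                and func.value.id == "hashlib"):
            continue
        if func.attr in WEAK_HASHES:
            findings.append((filename, node.lineno, func.attr))
        elif func.attr == "new" and node.args:
            first = node.args[0]
            if (isinstance(first, ast.Constant) and isinstance(first.value, str)
                    and first.value.lower() in WEAK_HASHES):
                findings.append((filename, node.lineno, first.value.lower()))
    return sorted(findings)
```

The output is a starting list for the manual tagging pass, not a replacement for it.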

Pillar 2 is Key Lifecycle Governance at Scale. Rotating 100 keys by hand? Fine.

Rotating 10,000? Impossible, unless you automate with policy enforcement and zero-trust attestation. Every revocation must log why, when, and who approved it.

No exceptions.
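One way to make "no exceptions" enforceable is to have the revocation call itself refuse to run without a reason and an approver. The sketch below is an in-memory illustration only. `KeyRegistry` and `RevocationRecord` are hypothetical names, and a real system would back this with an HSM or a secrets manager plus durable storage.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class RevocationRecord:
    key_id: str
    reason: str        # why
    approved_by: str   # who
    revoked_at: datetime  # when


class KeyRegistry:
    def __init__(self):
        self.active = set()
        self.revocations = []

    def revoke(self, key_id: str, reason: str, approved_by: str, now=None):
        """Revoke a key only if the why and who are both supplied."""
        if key_id not in self.active:
            raise KeyError(f"unknown or already revoked key: {key_id}")
        if not reason.strip() or not approved_by.strip():
            raise ValueError("revocation requires a reason and an approver")
        record = RevocationRecord(
            key_id, reason.strip(), approved_by.strip(),
            now or datetime.now(timezone.utc),
        )
        self.active.discard(key_id)
        self.revocations.append(record)
        return record
```

Because validation happens before any state changes, a rejected request leaves the key active and the log untouched.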

Pillar 3 is Protocol-Resilient Architecture. This isn’t about swapping RSA for Kyber next Tuesday. It’s about building negotiation layers that support hybrid X25519 + Kyber key exchange.

And logging every fallback event so you know when quantum risk actually bites.
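Here is a minimal sketch of what a negotiation layer with fallback logging could look like. It performs no real key exchange. The group labels (`"x25519+kyber768"`, `"x25519"`) are illustrative strings I made up rather than wire identifiers from any TLS library, and the logger name is arbitrary.

```python
import logging

logger = logging.getLogger("kex")

# Server preference order, strongest first. Labels are illustrative only.
SERVER_PREFERENCE = ["x25519+kyber768", "x25519"]
HYBRID_GROUPS = {"x25519+kyber768"}


def negotiate(client_offer, server_preference=SERVER_PREFERENCE) -> str:
    """Pick the first server-preferred group the client also offers."""
    offered = set(client_offer)
    for group in server_preference:
        if group in offered:
            if group not in HYBRID_GROUPS:
                # Record every classical-only session so the fallback rate is measurable.
                logger.warning("kex_fallback chosen=%s offered=%s",
                               group, sorted(offered))
            return group
    raise ValueError("no mutually supported key exchange group")
```

Counting those `kex_fallback` lines per client population is what tells you how much traffic would be exposed if the classical half had to be retired.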

Pillar 4 is Audit-Ready Operational Evidence. If it’s not logged, timestamped, and verifiable, it didn’t happen. Period.
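"Logged, timestamped, and verifiable" can be sketched as a hash-chained log, where each entry commits to the one before it. This is one possible approach under simple assumptions (JSON-serializable events, SHA-256, caller-supplied timestamps). The function names are mine, and a production setup would also sign entries or anchor the chain somewhere the operator can't rewrite.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous digest" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON so the same body always hashes to the same value.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log: list, event: dict, timestamp: str) -> dict:
    """Append an entry whose digest covers the event, time, and previous digest."""
    prev = log[-1]["digest"] if log else GENESIS
    body = {"timestamp": timestamp, "event": event, "prev": prev}
    entry = {**body, "digest": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every digest and check each entry links to its predecessor."""
    prev = GENESIS
    for entry in log:
        body = {k: entry[k] for k in ("timestamp", "event", "prev")}
        if entry["prev"] != prev or entry["digest"] != _digest(body):
            return False
        prev = entry["digest"]
    return True
```

Editing or dropping any earlier entry changes its digest, which breaks the link in every entry after it.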

You’re expanding. That means your crypto hygiene can’t be optional. It’s the foundation.

Growth Plan Drhcryptology fails fast when pillars are treated as theory.

I’ve watched teams ship “post-quantum ready” claims while still using hardcoded AES keys in config files. (Spoiler: that’s not readiness.)

Start with inventory. Then automate. Then negotiate.

Then prove it, all before launch day.

Don’t wait for the audit request to find out your evidence doesn’t exist.

You can read more about this in Crypto guide drhcryptology.

Compliance Isn’t a Checkbox. It’s Your Expansion Speed Limit

I’ve watched teams launch in the EU and get blindsided by GDPR questions about who holds the keys and who can decrypt personal data. They thought “encryption” was enough. It wasn’t.

Launching in Japan? The FSA requires hardware-backed key storage for wallet services. Not “should have.” Must have.

Skip it, and your service halts at onboarding.

Here’s how I time compliance with growth:

Pre-Expansion: Run a gap analysis. Build your evidence baseline before you file anything.

Phase 1: Validate your crypto-agile CI/CD pipeline. Can it rotate keys without breaking deploys?

Phase 2: Get third-party attestation. Not just “we did it,” but “they confirmed it.”

Most people miss cryptographic algorithm agility reporting. Auditors don’t care that you can upgrade from RSA-2048 to RSA-3072. They care that you logged when you did it, why, and who approved it.

This isn’t bureaucracy. It’s proof your system evolves under control.

I embed compliance metadata directly into Terraform configs. Like tagging a Vault policy with fips_140_3_level_2 = true. That way, infrastructure changes auto-document themselves.

No spreadsheets. No forgotten tickets.

Growth Plan Drhcryptology fails fast when compliance is bolted on.

It works when it’s baked into every milestone.

If you’re building cross-border crypto systems, this guide walks through real-world agility patterns. Not theory. No fluff.

Just what shipped.

Metrics That Actually Matter in Cryptology

I ignore “nodes online.” It’s noise. You’re not running a dashboard for investors.

Here’s what I track instead:

Mean Time to Cryptographic Incident Response

% of keys rotated within SLA

Algorithm deprecation coverage score

HSM utilization variance

Audit finding density per crypto component
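Two of these are simple enough to compute from data you probably already export. The sketch below assumes you can get a mapping of key id to last rotation time and a series of HSM utilization samples between 0 and 1. The function names and input shapes are my own placeholders, not a Vault or Prometheus API.

```python
from datetime import datetime, timedelta
from statistics import pvariance


def pct_rotated_within_sla(last_rotated: dict, now: datetime,
                           sla: timedelta) -> float:
    """Percentage of keys whose last rotation is no older than the SLA."""
    if not last_rotated:
        return 100.0  # nothing to rotate, nothing overdue
    on_time = sum(1 for ts in last_rotated.values() if now - ts <= sla)
    return 100.0 * on_time / len(last_rotated)


def hsm_utilization_variance(samples) -> float:
    """Population variance of utilization samples; spikes push this up."""
    return pvariance(samples)
```

Both numbers are only as honest as their inputs, so feed them from audit logs rather than from whatever the rotation job claims it did.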

That first one? It’s brutal. But real.

If your team takes 42 hours to respond to a broken signature chain, you’re already compromised. One team cut it to under 11 minutes, just by adding structured error codes to verification failures and wiring them into tracing. No new service.

Just better logging.
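I don't have that team's code, so treat the following as a sketch of the idea rather than what they shipped. It uses an HMAC tag as a stand-in for a real signature check, and the error code strings, the `VerifyError` enum, and the `fields` dict layout are all invented for illustration. The returned dict is flat so it can be attached to a log line or trace span as-is.

```python
import enum
import hashlib
import hmac


class VerifyError(enum.Enum):
    UNKNOWN_KEY = "SIG_UNKNOWN_KEY"
    RETIRED_KEY = "SIG_RETIRED_KEY"
    BAD_SIGNATURE = "SIG_BAD_SIGNATURE"


def verify(message: bytes, tag: bytes, key_id: str, keys: dict,
           retired=frozenset()):
    """Return (ok, fields); `fields` is a flat dict for a log line or span."""
    error = None
    if key_id not in keys:
        error = VerifyError.UNKNOWN_KEY
    elif key_id in retired:
        error = VerifyError.RETIRED_KEY
    else:
        # HMAC-SHA256 stands in for the real signature scheme here.
        expected = hmac.new(keys[key_id], message, hashlib.sha256).digest()
        if not hmac.compare_digest(expected, tag):
            error = VerifyError.BAD_SIGNATURE
    fields = {"key_id": key_id, "error_code": error.value if error else None}
    return error is None, fields
```

With a distinct code per failure cause, "signature verification failed" stops being one undifferentiated alert and becomes something you can group and route.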

You don’t need custom agents. Parse HashiCorp Vault audit logs. Scrape Prometheus exporters.

Done.

High key rotation frequency means nothing if rotations happen at 3 a.m. without attestation. Or if the old key stays active for 17 extra minutes because someone forgot to flush the cache.

Entropy bottlenecks show up as HSM spikes. Not in Slack alerts. In variance graphs.

Audit finding density tells you where your weakest crypto component lives. Not which team is “slacking.”

This isn’t about reporting. It’s about fixing before the alert fires.

I’ve seen teams chase vanity metrics while their TLS handshake latency crept up unnoticed. Don’t be that team.

If you’re building out a Growth Plan Drhcryptology, start here. Not with dashboards, but with what breaks and how fast you fix it.

For deeper context on how this plays out in live exchange environments, check out the Binance exchange drhcryptology analysis.

Your Keys Are Already Out of Control

I’ve seen it happen. Teams ship fast. Scale hard.

Then—boom. A breach or audit finds keys scattered like loose change.

You think latency will kill your expansion. It won’t. Growth Plan Drhcryptology fails because you don’t know where your keys live.

That inventory isn’t paperwork. It’s your first real line of defense.

You skipped it. I get it. But skipping it means trusting luck instead of proof.

So do this: download (or build) a lightweight crypto inventory template. CSV. Simple validation rules.

Run it on one production service this week.
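If you build your own, the validation rules can start this small. The column names and the deprecated-algorithm list below are assumptions I picked for illustration, so swap in whatever your template actually uses.

```python
import csv
import io

# Hypothetical template columns; adjust to your own inventory format.
REQUIRED = ("service", "key_id", "algorithm", "storage", "owner", "last_rotated")
DEPRECATED = {"sha1", "md5", "des", "3des", "rc4"}  # illustrative, not exhaustive


def validate_inventory(csv_text: str) -> list:
    """Return a list of human-readable problems found in the inventory CSV."""
    reader = csv.DictReader(io.StringIO(csv_text))
    missing = [c for c in REQUIRED if c not in (reader.fieldnames or [])]
    if missing:
        return [f"missing columns: {', '.join(missing)}"]
    problems = []
    # Data starts on line 2 because line 1 is the header.
    for line_no, row in enumerate(reader, start=2):
        for col in REQUIRED:
            if not (row[col] or "").strip():
                problems.append(f"line {line_no}: empty {col}")
        algorithm = (row["algorithm"] or "").strip()
        if algorithm.lower() in DEPRECATED:
            problems.append(f"line {line_no}: deprecated algorithm {algorithm}")
    return problems
```

An empty result doesn't mean the service is clean. It means the inventory is at least complete enough to argue about.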

Not next month. Not after the sprint ends. This week.

Because untracked keys don’t wait for your roadmap.

They wait for attackers.

Start mapping them now.